AI + Crypto

Artificial intelligence is breaking out of the lab and into open markets. At the same time, blockchains are evolving beyond finance to coordinate scarce digital resources like compute, bandwidth, and data. AI crypto tokens sit at this intersection, turning AI capabilities into programmable, tradeable, and permissionless services. In this guide, we map the landscape, show how tokens power decentralized AI economies, and explain how to evaluate real utility in a space full of hype.

Unlike closed AI platforms, decentralized intelligence lets anyone provide or consume AI resources (GPUs, models, datasets, or even autonomous agents) using shared protocols and incentives. The promise: more open access to compute, lower costs, and markets where the best models win on merit, not walled-garden distribution. AI crypto tokens are the coordination layer that makes it all work: they meter usage, bootstrap supply, reward quality, and secure the networks that deliver intelligence on demand.

What you’ll learn

The core categories of AI-native networks

How token designs (emissions, fees, staking) affect utility

Metrics and frameworks to compare projects

Real examples of decentralized AI in production

What are AI crypto tokens?

AI crypto tokens are digital assets that incentivize and govern decentralized AI networks. They reward nodes for useful work (like rendering, training, or inference), pay for query or compute usage, and often participate in protocol governance. This isn’t just “AI-themed” branding—many networks already settle real workloads and fees on-chain (e.g., GPU rendering, video AI, indexing). For instance, Render Network routes GPU jobs, while Akash connects buyers and sellers of cloud GPUs at market rates; both rely on their native tokens to meter payments and align participants.

How AI crypto tokens power decentralized intelligence

At a high level, decentralized AI works like a marketplace.

Supply

Providers contribute scarce resources (GPUs, storage, bandwidth, labeled data, model endpoints).

Demand

Builders or agents buy those resources to render images, train models, run inference, stream video, or query data.

Settlement & Incentives

Tokens price the work, secure the network, and reward quality (often via staking, slashing, or reputation).

Because markets are open, prices can be more competitive. Akash has showcased headline GPU-hour rates well below centralized clouds for comparable cards (one example page listed H200 pricing far under Web2 providers), illustrating how permissionless supply discovery can compress margins.
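To make the marketplace idea concrete, here is a minimal sketch of how a buyer might pick among open GPU offers. It is not how Akash, Render, or any specific network matches orders; the `Offer` fields, provider names, prices, and stake amounts are all hypothetical, and the selection rule (cheapest eligible offer, with stake as a quality filter) is an assumption chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Offer:
    provider: str          # hypothetical provider name
    gpu_model: str         # e.g. "H200"
    price_per_hour: float  # quoted price in USD (illustrative)
    stake: float           # tokens staked by the provider (illustrative)


def pick_offer(offers: list[Offer], gpu_model: str, max_price: float,
               min_stake: float = 0.0) -> Optional[Offer]:
    """Return the cheapest offer matching the GPU model, price cap, and stake floor."""
    eligible = [
        o for o in offers
        if o.gpu_model == gpu_model
        and o.price_per_hour <= max_price
        and o.stake >= min_stake
    ]
    # Cheapest first; break price ties in favor of the larger stake.
    eligible.sort(key=lambda o: (o.price_per_hour, -o.stake))
    return eligible[0] if eligible else None


offers = [
    Offer("provider-a", "H200", 3.10, stake=500.0),
    Offer("provider-b", "H200", 2.40, stake=50.0),
    Offer("provider-c", "H200", 2.60, stake=900.0),
    Offer("provider-d", "A100", 1.20, stake=700.0),
]

best = pick_offer(offers, "H200", max_price=3.00, min_stake=100.0)
print(best.provider if best else "no match")
```

In this toy data the cheapest H200 offer is filtered out by the stake floor, which is the point of the sketch: price discovery and staking requirements interact, so the lowest headline rate is not always the offer a buyer ends up with.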

Token categories & leading projects (2025)

Compute/GPU markets

Render (RNDR): Decentralized GPU rendering and AI workloads; the RNDR token pays node operators for completed jobs. Creators rent idle GPU power for training or generative pipelines without vendor lock-in.

Akash (AKT): A decentralized cloud marketplace where buyers lease GPU capacity; AKT coordinates payments and security. Their public materials highlight competitive pricing versus major clouds and growing AI-specific tooling.

io.net (IO): A DePIN network aggregating idle GPUs; its Series A (2024) underlined investor demand for decentralized AI compute.

Model & inference networks

Bittensor (TAO): An open marketplace where subnets compete to provide useful AI services; emissions and rewards align incentives for quality contributions. Its first halving is projected around December 10–12, 2025 (supply-based, so date can shift), reducing daily issuance from ~7,200 to ~3,600 TAO.

Golem (GLM): General compute marketplace with an AI focus via Modelserve for scalable inference across consumer and pro GPUs.

Data/knowledge & agents

Artificial Superintelligence (ASI): In 2024, Fetch.ai, SingularityNET, and Ocean initiated a token merger to ASI to pool agents, data, and model ecosystems. This consolidation aims at cross-domain AI use cases under a single economic umbrella.

The Graph (GRT): Indexes on-chain data; AI agents and apps pay to query subgraphs, which are critical for context-rich, multi-chain automation. Recent reports detail network roles and incentives.

Media & real-time AI

Livepeer (LPT): Decentralized video compute enabling AI-enhanced streaming, understanding, and generation. Messari reports showed 52M minutes transcoded in Q1 2025 (+49% QoQ) and continued growth in AI-native fees into Q2.

Together, these networks demonstrate that AI crypto tokens aren’t just speculative wrappers; they are payment rails and incentive mechanisms for real workloads across compute, data, and media.

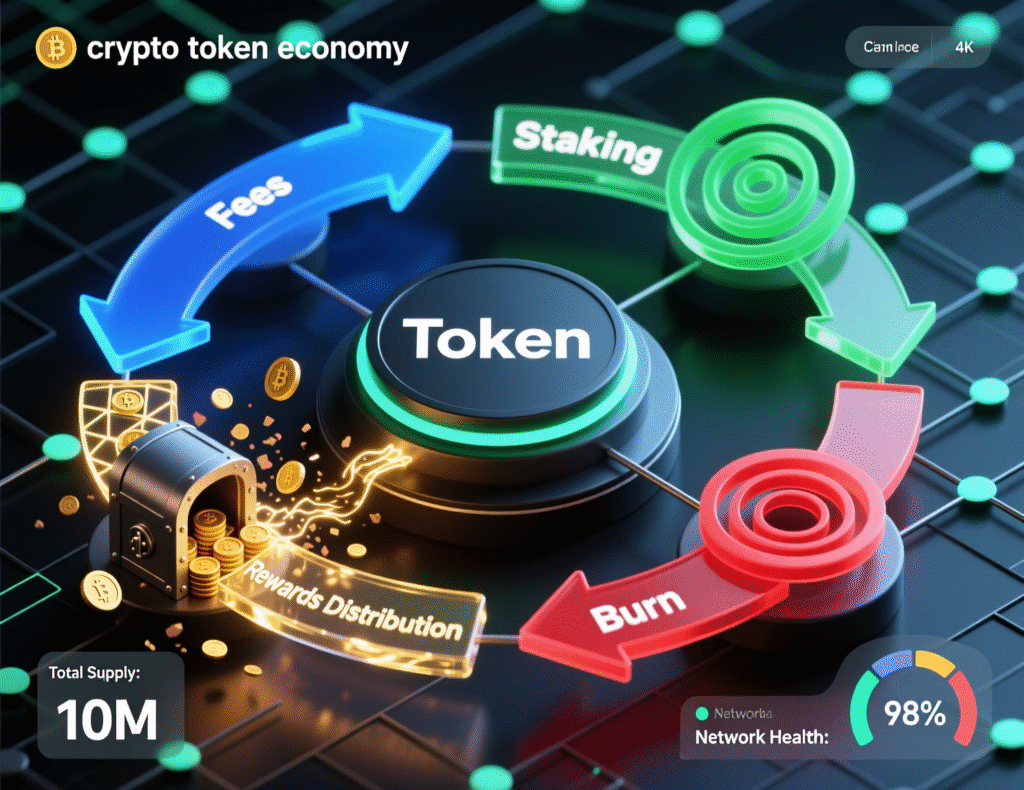

Tokenomics that matter for decentralized AI

The value of AI crypto tokens comes from usage + security + governance, not slogans. Focus on:

Emission schedule & halvings

Predict how supply evolves. For example, TAO’s halving cuts daily issuance in half, a fundamental shift in miner economics and potential sell pressure. Because Bittensor ties halving to circulating supply (and recycling), exact timing can shift—plan for a date range, not a single day.

Fee capture

Which actions pay fees (rendering frames, GPU hours, queries, inference calls)? Are fees burned, distributed to stakers, or accruing to treasuries?

Staking & slashing

Does staking improve work quality (e.g., better model outputs), or is it purely financial?

Demand elasticity

Will lower prices bring more usage, or are there product bottlenecks (SDKs, dev experience, compliance)?
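Returning to the emission-schedule point, a supply-based halving is easier to reason about as a date range than a date. The sketch below estimates that range from circulating supply and net daily issuance. The current supply, the recycling rate, and the 10.5M threshold are assumptions for illustration (only the ~7,200 and ~3,600 daily figures come from the text above); read live values from a network dashboard before relying on any output.

```python
def days_until_halving(current_supply: float, halving_supply: float,
                       daily_issuance: float, daily_recycled: float = 0.0) -> float:
    """Days until circulating supply reaches the halving threshold.

    Recycled tokens are assumed to leave circulating supply, slowing net growth.
    """
    net_daily = daily_issuance - daily_recycled
    if net_daily <= 0:
        raise ValueError("net issuance must be positive to reach the threshold")
    remaining = max(halving_supply - current_supply, 0.0)
    return remaining / net_daily


# Illustrative inputs only.
CURRENT_SUPPLY = 10_200_000.0  # hypothetical circulating supply
HALVING_SUPPLY = 10_500_000.0  # assumed halving threshold
DAILY_ISSUANCE = 7_200.0       # approximate pre-halving issuance from the text
POST_HALVING_ISSUANCE = DAILY_ISSUANCE / 2

# No recycling gives the earliest date; some recycling pushes it later.
early = days_until_halving(CURRENT_SUPPLY, HALVING_SUPPLY, DAILY_ISSUANCE)
late = days_until_halving(CURRENT_SUPPLY, HALVING_SUPPLY, DAILY_ISSUANCE,
                          daily_recycled=1_200.0)  # hypothetical recycling rate

print(f"halving window: {early:.1f} to {late:.1f} days from now")
print(f"annual issuance before: {DAILY_ISSUANCE * 365:,.0f}")
print(f"annual issuance after:  {POST_HALVING_ISSUANCE * 365:,.0f}")
```

Even a modest recycling rate moves the estimate by more than a week in this example, which is why a window is a more honest planning input than a single day.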

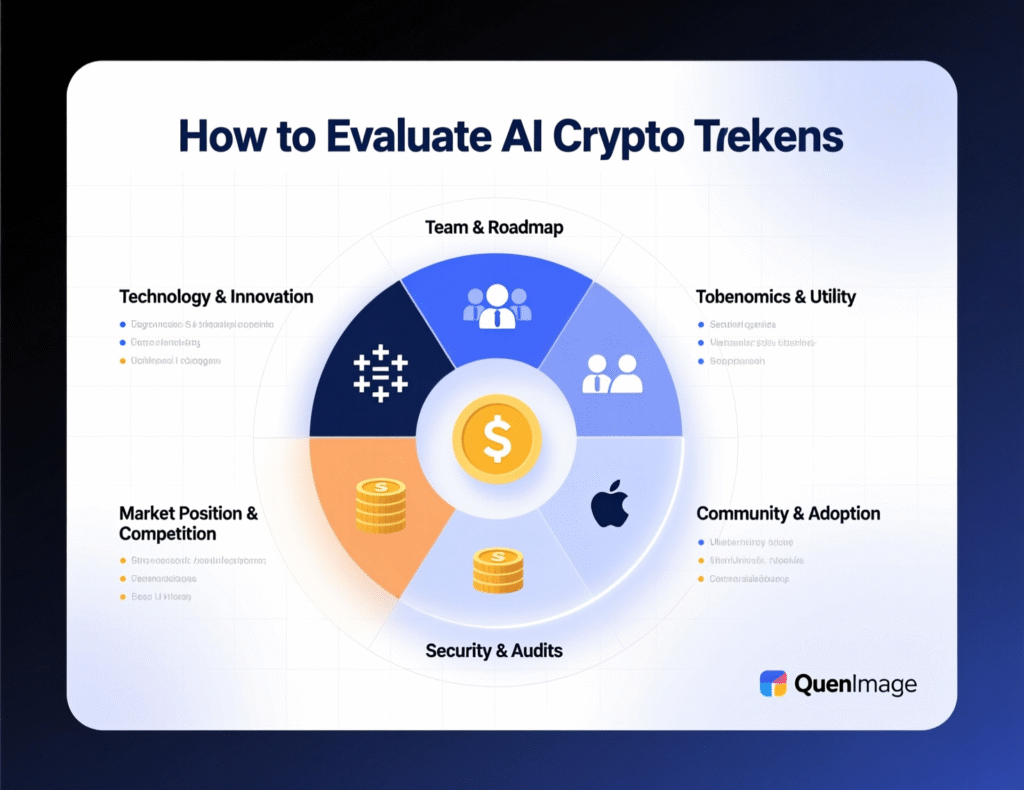

A practical framework to compare networks

Real usage

On-chain fees, minutes transcoded, GPU hours, or queries served (e.g., Livepeer’s minutes and AI-native fee growth).

Unit economics

$/GPU-hour vs centralized clouds; are decentralized markets consistently cheaper (see Akash examples)?

Supply depth

Diversity of GPU classes (consumer vs data-center), global geographies, and availability SLAs.

Developer surface area

SDKs, templates, agent frameworks, and documentation quality (Render/Livepeer/Golem provide concrete SDKs and job pipelines).

Roadmap credibility

Are milestones shipped on time (e.g., halving readiness, GPU integrations, subnet SDKs)?

Security & governance

Slashing conditions, audits, and upgrade safety.
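One way to apply the six criteria above is a simple weighted scorecard. The sketch below is an illustration, not an established methodology: the weights, the 0-5 rating scale, and the two example networks are hypothetical and should be replaced with your own judgments and measured data.

```python
# Hypothetical weights for the six criteria; they sum to 1.0.
WEIGHTS = {
    "real_usage": 0.30,
    "unit_economics": 0.20,
    "supply_depth": 0.15,
    "developer_surface": 0.15,
    "roadmap_credibility": 0.10,
    "security_governance": 0.10,
}


def score_network(ratings: dict[str, float],
                  weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of 0-5 ratings; every criterion must be rated."""
    missing = set(weights) - set(ratings)
    if missing:
        raise KeyError(f"missing ratings: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Made-up ratings for two placeholder networks.
candidates = {
    "network-x": {"real_usage": 4, "unit_economics": 3, "supply_depth": 3,
                  "developer_surface": 4, "roadmap_credibility": 2,
                  "security_governance": 3},
    "network-y": {"real_usage": 2, "unit_economics": 5, "supply_depth": 4,
                  "developer_surface": 2, "roadmap_credibility": 4,
                  "security_governance": 3},
}

scores = {name: score_network(r) for name, r in candidates.items()}
for name, value in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {value:.2f}")
```

Weighting real usage most heavily reflects the article's emphasis on verifiable workloads; a team optimizing purely for cost might reasonably shift weight toward unit economics.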

Risks and realities of AI crypto tokens

AI crypto tokens face market volatility, execution risk, and regulatory uncertainty. Some “AI” coins have minimal linkage to real workloads—filter by usage metrics and fee flows. Timing narratives (like “pre-halving runs”) can be seductive but unreliable; treat them as secondary to fundamentals. Where possible, verify claims with primary sources: network dashboards, code repos, or independent research (e.g., Messari, CoinDesk).

Case studies: decentralized AI in production

Case study 1: GPU rendering & gen-AI on Render

A motion-graphics studio splits a generative video pipeline across multiple RNDR nodes, paying per job result with RNDR. Render’s marketplace lets them tap idle GPUs without long-term contracts, making prototyping cheaper and faster while scaling to heavy bursts during production.

Case study 2: Training/inference on open compute (Akash & Golem)

A startup fine-tunes speech and diffusion models across heterogeneous GPUs, scheduling training on Akash for H200-class tasks and serving smaller inferences via Golem’s Modelserve endpoints on consumer GPUs. The mix reduces cost while meeting latency targets for different models.

Getting started: a step-by-step approach

Define the workload (render, training, inference, video, data queries).

Select networks by category (compute, data, media).

Check real usage: fees, minutes, GPU hours, or queries.

Assess token design: emissions, burn, staking rewards, governance.

Run a small pilot and measure cost/latency vs centralized alternatives.

Scale & automate with SDKs, agent frameworks, and alerting.
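For the pilot step, the comparison can be as simple as cost per GPU-hour and median latency for the same workload on two platforms. The sketch below assumes you have already collected those numbers yourself; the `PilotRun` structure and every figure in it are hypothetical placeholders, not measurements from any network or cloud.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PilotRun:
    label: str
    gpu_hours: float
    total_cost_usd: float
    latencies_ms: list[float]

    @property
    def cost_per_gpu_hour(self) -> float:
        return self.total_cost_usd / self.gpu_hours

    @property
    def median_latency_ms(self) -> float:
        return median(self.latencies_ms)


def savings_pct(candidate: PilotRun, baseline: PilotRun) -> float:
    """Percent saved per GPU-hour versus the baseline (negative means more expensive)."""
    return (1 - candidate.cost_per_gpu_hour / baseline.cost_per_gpu_hour) * 100


# Placeholder numbers for the same job run on two platforms.
decentralized = PilotRun("decentralized pilot", gpu_hours=40, total_cost_usd=96.0,
                         latencies_ms=[180, 220, 205, 260, 190])
centralized = PilotRun("centralized baseline", gpu_hours=40, total_cost_usd=160.0,
                       latencies_ms=[150, 160, 155, 170, 165])

for run in (decentralized, centralized):
    print(f"{run.label}: ${run.cost_per_gpu_hour:.2f}/GPU-hour, "
          f"median latency {run.median_latency_ms:.0f} ms")
print(f"savings vs baseline: {savings_pct(decentralized, centralized):.1f}%")
```

Reporting latency next to cost keeps the trade-off visible: a cheaper GPU-hour only helps if the workload still meets its latency target.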

Final Words

Open AI markets reward real work: compute delivered, frames rendered, queries answered. That’s why AI crypto tokens matter: they turn AI from a gated platform into a permissionless economy. The winners will be the networks that (1) serve real workloads, (2) align incentives across providers and users, and (3) ship developer-centric tooling. If you build or invest here, bias toward verifiable usage and transparent economics. Start small, measure everything, and grow into the networks where value, not narratives, drives demand.

Call to action

Want a practical shortlist tailored to your use case (rendering, training, video, or agents)? Share your workload and budget, and we’ll map the best networks, SDKs, and token strategies for you.

FAQs

Q1) How do AI crypto tokens work?

A : They coordinate open markets for AI resources. Providers stake or register nodes, complete jobs (rendering, inference, indexing), and receive tokens; consumers pay tokens for usage. Designs vary: some burn fees, others redistribute to stakers or treasuries.

Schema expander: Mechanism: staking + metered fees; Benefits: permissionless access, competitive pricing.

Q2) How can I evaluate AI crypto tokens for real utility?

A : Track on-chain fees, jobs/minutes processed, GPU-hour liquidity, SDK quality, and post-emission sustainability. Compare $/GPU-hour or $/query vs centralized clouds, and watch roadmap delivery.

Schema expander: Metrics include demand-side fees, unique providers, utilization, latency.

Q3) How do I start using decentralized GPUs?

A : Pick a network (e.g., Akash or Render), create a wallet, fund it, select a provider/queue, and submit a job via CLI or console. Begin with a small pilot to validate throughput and reliability.

Schema expander: Steps: fund → select node → submit job → verify output → scale.

Q4) How risky are AI crypto tokens?

A : The main risks are volatile markets, technical execution risk, and regulatory change. Reduce risk by focusing on networks with real usage and transparent economics; avoid speculative clones with weak documentation.

Schema expander: Risk types: market, technical, legal; Mitigation: position sizing, due diligence.

Q5) How does the ASI merger affect tokenholders?

A : In 2024 the teams began migrating AGIX/OCEAN/FET into ASI to unify liquidity and developer ecosystems around agents and data. Check official migration guides/exchanges for the latest steps.

Schema expander: Benefit: shared liquidity & roadmap; Action: verify supported wallets/venues.

Q6) How does Bittensor’s halving influence token economics?

A : Cutting daily TAO emissions by ~50% reduces potential sell pressure, but miner and staker rewards also drop, so network participation and subnet quality become more sensitive to fees and demand. Timing is supply-based and can shift.

Schema expander: Effect: scarcity ↑, rewards ↓; Watch: subnet adoption post-halving.

Q7) What’s the difference between Render and Livepeer?

A : Render focuses on GPU rendering and some AI workloads; Livepeer focuses on video compute (transcoding and AI video processing). Both use tokens (RNDR, LPT) to pay providers, but target different job types.

Schema expander: Render: frames & gen-AI scenes; Livepeer: streaming & real-time AI video.

Q8) How do developers pay for data/knowledge in Web3?

A : Projects like The Graph (GRT) charge per query to access indexed on-chain data; AI agents can use these subgraphs to retrieve state, events, and analytics across chains.

Schema expander: Flow: indexers → subgraphs → query fees → distribution.